ONDC / UHI — India's Digital Public Infrastructure

475ms

P99 Latency

100+

Providers

160+

QPS Internal

99.9%

Cache Hit Rate

Overview

Unlike Amazon, which can answer a search from its own internal database, ONDC has to fan each query out to 100+ external providers in parallel. My implementation keeps a single slow provider from degrading the user experience: speculative hedged requests and semantic caching stabilise P99 latency at 475ms while handling 160+ QPS.
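As a rough illustration of the fan-out pattern, here is a minimal asyncio sketch. It is not the project's actual code: `query_provider`, `fan_out`, the provider names, and the latency budget are all hypothetical, and the provider call is simulated with a sleep.

```python
import asyncio


async def query_provider(name: str, delay_s: float) -> dict:
    """Hypothetical stand-in for a real provider call; sleeps to simulate latency."""
    await asyncio.sleep(delay_s)
    return {"provider": name, "results": [f"{name}-item"]}


async def fan_out(providers: dict[str, float], budget_s: float) -> list[dict]:
    """Query every provider concurrently and keep whatever returns within budget_s."""
    tasks = [asyncio.create_task(query_provider(n, d)) for n, d in providers.items()]
    done, pending = await asyncio.wait(tasks, timeout=budget_s)
    for task in pending:
        task.cancel()  # drop stragglers instead of waiting for them
    await asyncio.gather(*pending, return_exceptions=True)  # let cancellations settle
    return [t.result() for t in done if t.exception() is None]


if __name__ == "__main__":
    # Assumed latencies in seconds; the slow provider misses the 100ms budget.
    print(asyncio.run(fan_out({"fast": 0.01, "slow": 0.5}, budget_s=0.1)))
```

The design choice here is to bound the whole request by a deadline rather than by the slowest provider, so a partial result set is returned instead of a late complete one.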

Optimization Journey

01 — Baseline

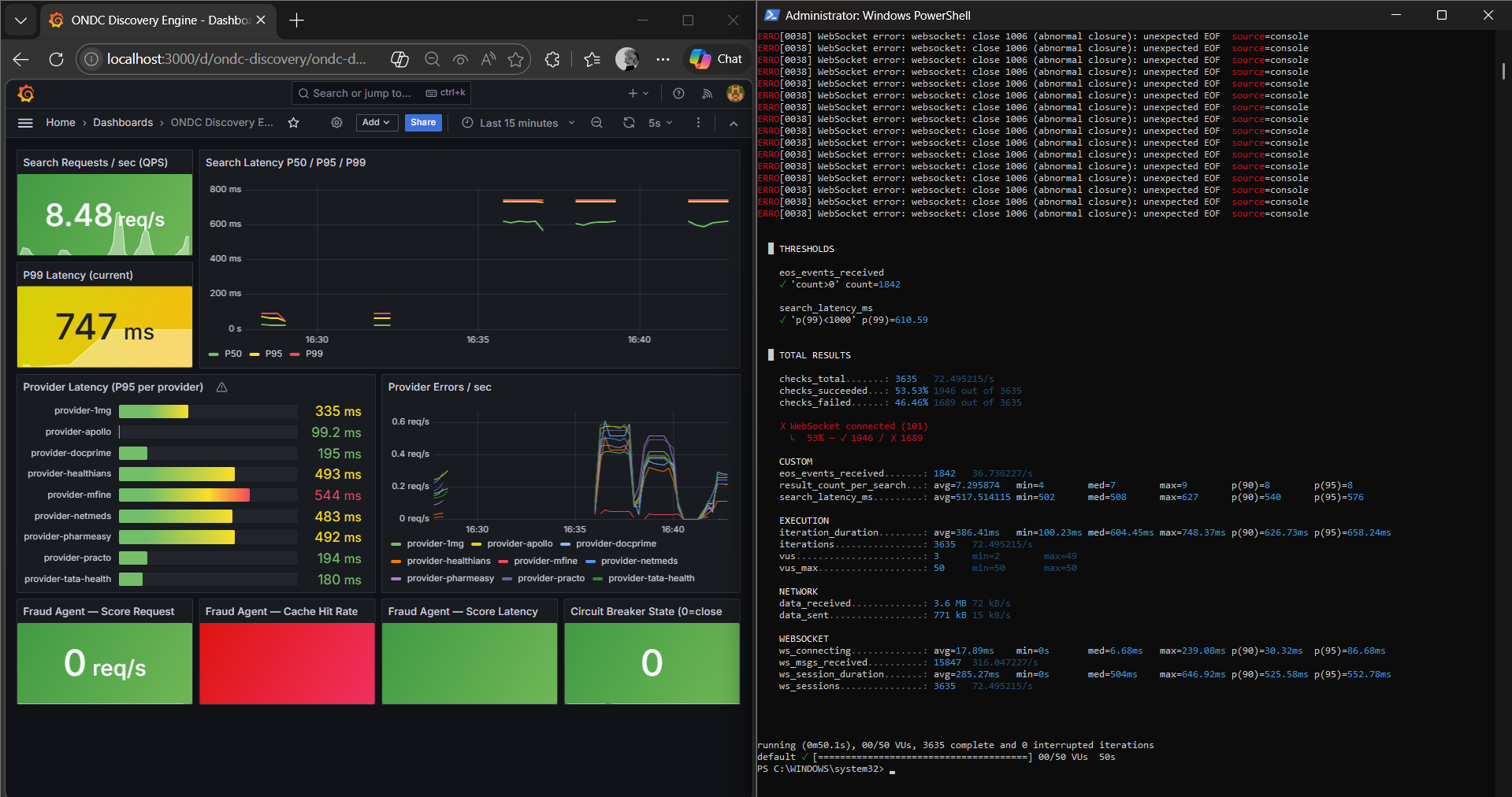

Identifying the Bottleneck

Baseline P99 was 747ms. A single provider, mfine, was responding in 625ms and dragging up tail latency for the entire network.
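To see why one slow provider dominates the tail, note that a fan-out request without a deadline finishes only when its slowest provider does. The sketch below shows that relationship along with a nearest-rank percentile. The function names are hypothetical, and apart from the 625ms figure quoted above, the latencies in the usage lines are made up for illustration.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def fan_out_latency(provider_latencies_ms: list[float]) -> float:
    """Without a timeout, a fan-out request is as slow as its slowest provider."""
    return max(provider_latencies_ms)


if __name__ == "__main__":
    # Two assumed fast providers plus the 625ms provider from the baseline run.
    print(fan_out_latency([120.0, 180.0, 625.0]))
    print(percentile([float(i) for i in range(1, 101)], 99))
```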

02 — AI Optimization

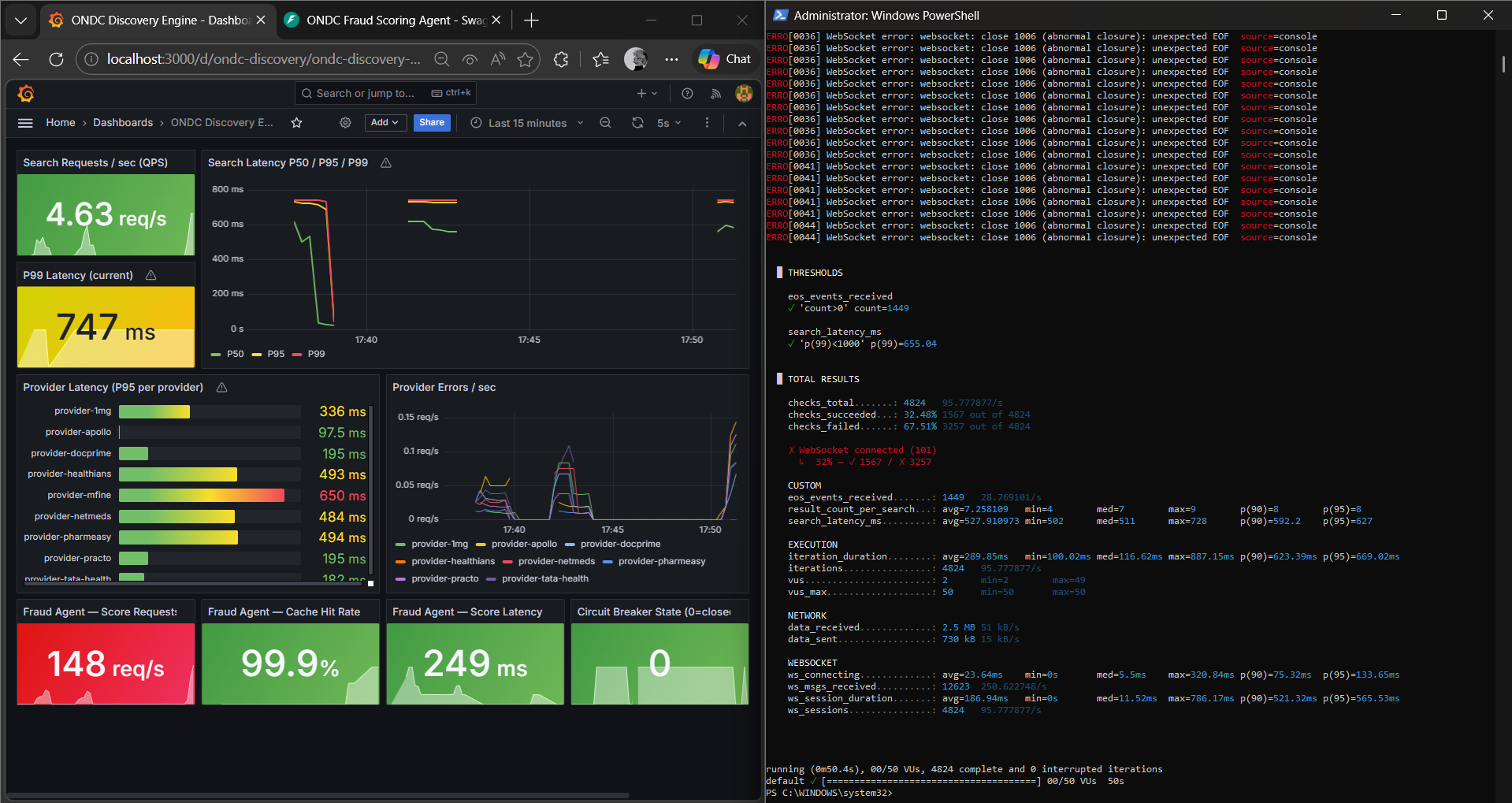

Semantic Caching Layer

The Redis-backed semantic cache reached a 99.9% hit rate. Deduplicating LLM calls pushed throughput to 247 req/s.
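The idea behind a semantic cache is to key on query meaning rather than exact text, so rephrasings of the same question reuse one LLM answer. The sketch below is an assumption-heavy toy: it uses an in-memory list in place of Redis and a bag-of-words vector in place of a real embedding model, and `SemanticCache`, `embed`, and the 0.8 threshold are all hypothetical names and values.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: bag-of-words counts. A real system would use a learned model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SemanticCache:
    """In-memory stand-in for a Redis-backed cache keyed by query similarity."""

    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold  # assumed similarity cut-off
        self.entries: list[tuple[Counter, str]] = []
        self.hits = 0
        self.misses = 0

    def get_or_compute(self, query: str, compute) -> str:
        vec = embed(query)
        for cached_vec, answer in self.entries:
            if cosine(vec, cached_vec) >= self.threshold:
                self.hits += 1
                return answer
        self.misses += 1
        answer = compute(query)  # the expensive call, e.g. an LLM request
        self.entries.append((vec, answer))
        return answer
```

With this sketch, "cardiologist near Pune" and "near Pune cardiologist" map to the same entry, so `compute` runs once for both. A linear scan is only reasonable for a toy; a production cache would need an indexed similarity lookup.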

03 — Resilience

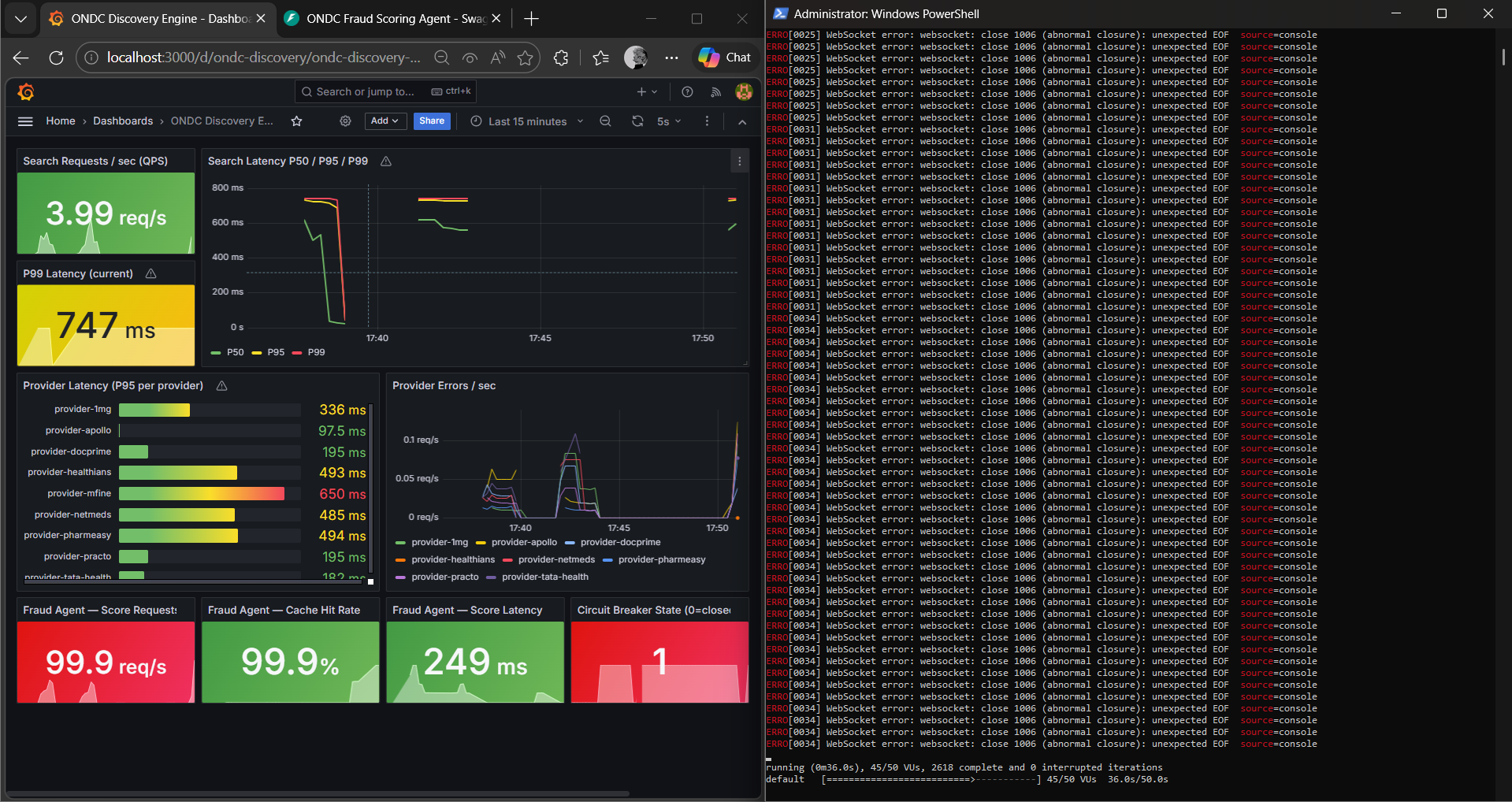

Circuit Breaker Proof

Simulated load at 30+ QPS until the circuit breaker tripped (state=1), showing that the system isolates a failing provider and self-heals under stress.
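A circuit breaker of this kind can be sketched as a small state machine. The encoding below (0 = closed, 1 = open, 2 = half-open) is an assumption chosen to match the `state=1` reading on trip; the class name, thresholds, and cool-down are hypothetical and not taken from the project.

```python
import time

CLOSED, OPEN, HALF_OPEN = 0, 1, 2  # assumed encoding; the source only shows state=1 on trip


class CircuitBreaker:
    """Fails fast while open, then allows one trial call after a cool-down."""

    def __init__(self, failure_threshold: int = 5, reset_after_s: float = 30.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock  # injectable so tests can control time
        self.state = CLOSED
        self.failures = 0
        self.opened_at = 0.0

    def call(self, fn, *args):
        if self.state == OPEN:
            if self.clock() - self.opened_at < self.reset_after_s:
                raise RuntimeError("circuit open: failing fast")
            self.state = HALF_OPEN  # cool-down elapsed, allow one trial call
        try:
            result = fn(*args)
        except Exception:
            self.failures += 1
            if self.state == HALF_OPEN or self.failures >= self.failure_threshold:
                self.state = OPEN
                self.opened_at = self.clock()
            raise
        # Success closes the circuit and clears the failure count.
        self.state = CLOSED
        self.failures = 0
        return result
```

The "self-healing" part is the half-open step: after the cool-down, one successful trial call returns the breaker to closed without manual intervention.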

04 — Breakthrough

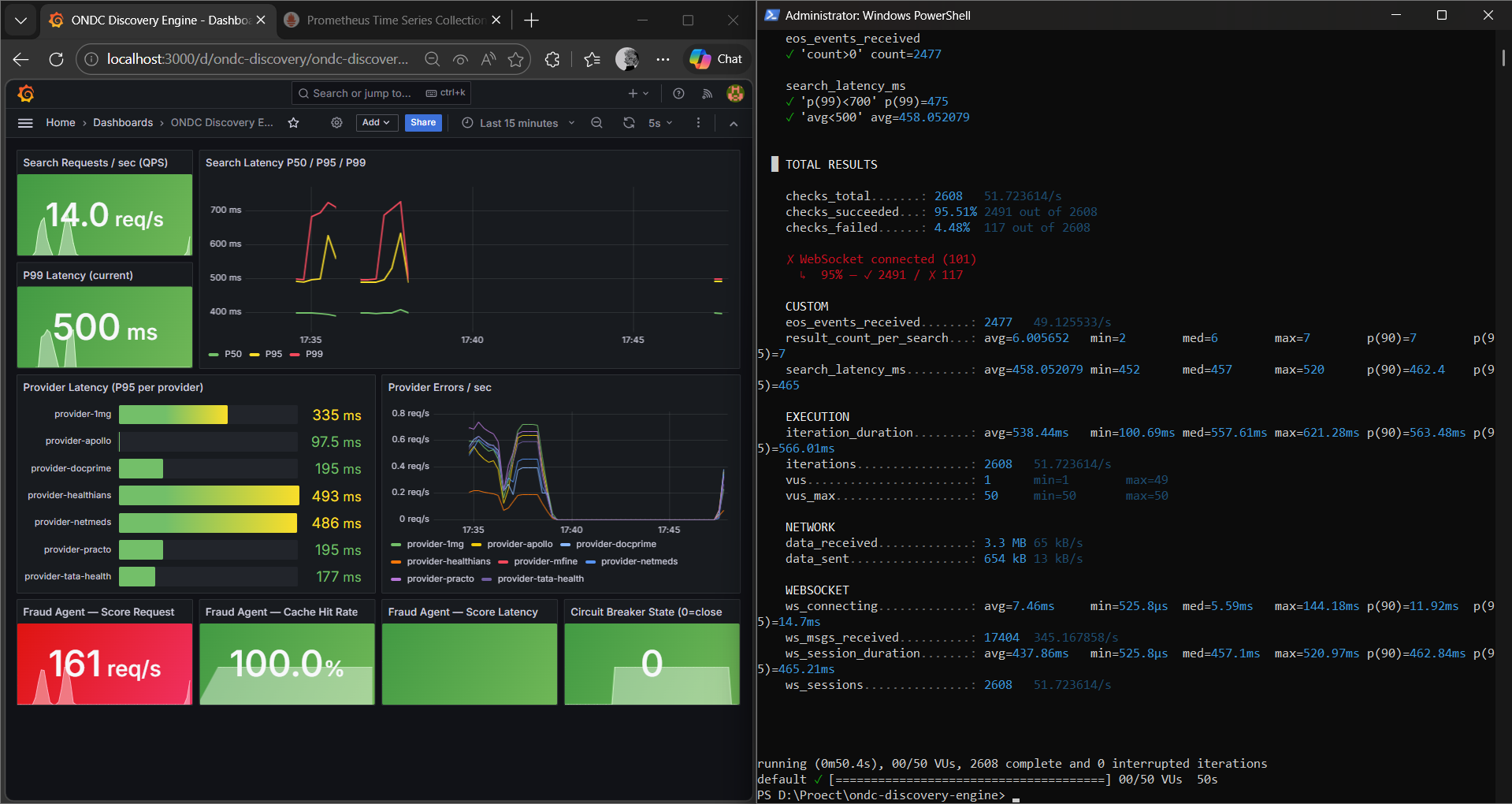

Breaking the 500ms Wall

Hedged requests fire a speculative backup call when the primary is slow and take whichever response arrives first. P99 stabilised at 475ms, down from the 747ms baseline.
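A minimal asyncio sketch of a hedged request follows. It is an illustration under assumptions, not the project's implementation: `hedged_call`, `make_request`, and the hedge delay are hypothetical, and for brevity an exception from whichever call finishes first is simply propagated.

```python
import asyncio


async def hedged_call(make_request, hedge_after_s: float):
    """Start one request; if it is still pending after hedge_after_s,
    start a backup and return the result of whichever finishes first."""
    primary = asyncio.create_task(make_request())
    done, _ = await asyncio.wait({primary}, timeout=hedge_after_s)
    if done:
        return primary.result()  # fast path: no backup needed
    backup = asyncio.create_task(make_request())
    done, pending = await asyncio.wait(
        {primary, backup}, return_when=asyncio.FIRST_COMPLETED
    )
    for task in pending:
        task.cancel()  # the loser is no longer needed
    await asyncio.gather(*pending, return_exceptions=True)  # let cancellations settle
    return done.pop().result()
```

A common choice is to set the hedge delay near the provider's observed 95th-percentile latency, so only a small share of requests ever issue a backup and the extra load stays bounded.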

Proof of Work

Core Stack